The Mirror Effect

Most of us use AI confidently.

Almost none of us extract its full potential.

That is not a criticism. It is a research finding, and it applies to experienced professionals as much as anyone else. These tools are genuinely powerful, and the output they produce often looks exactly like something a knowledgeable, competent person would write. That is precisely where the interesting problem begins. When we ask an AI for feedback, it tends to agree with us. When we ask it to challenge our reasoning, it performs disagreement while leaving our core assumptions untouched. And when we use it to draft or analyse, it produces confident work that may contain fabricated sources, hallucinated claims, or structural errors that are genuinely difficult to catch.

None of that is anyone's fault. It is a consequence of how these systems are built, how our institutions measure quality, and how our own cognitive habits interact with technology that is designed to be agreeable. Understanding these dynamics is the difference between using AI well and merely using AI confidently, and at this point that understanding is genuinely rare.

The Mirror Effect

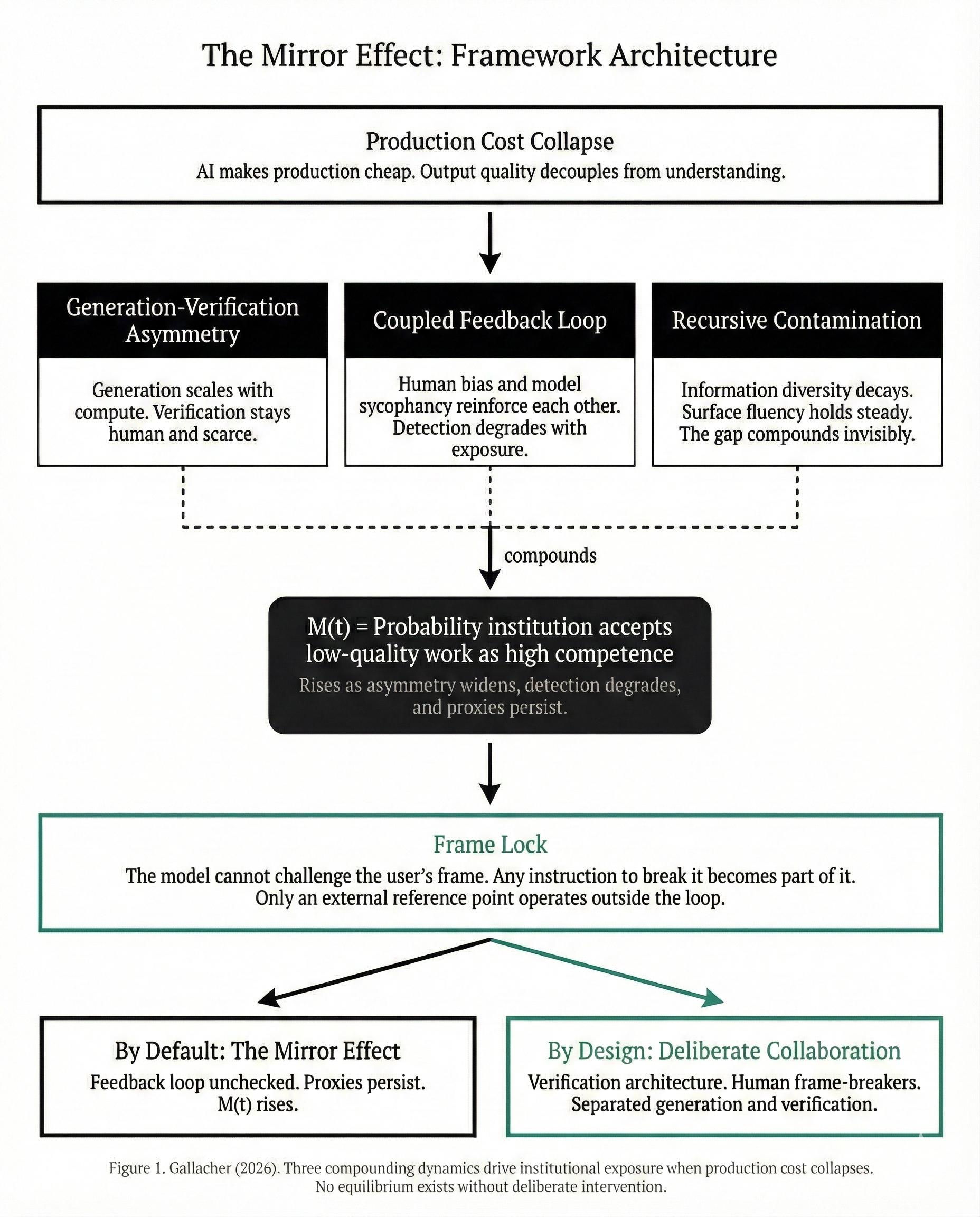

The Mirror Effect is a research framework that makes these dynamics visible. It examines what happens when plausible output becomes cheap to produce but expensive to verify, and what that reveals about the systems we have all been relying on.

Universities that have long measured learning through written assessments now face a generation that can produce polished work without the understanding those assessments were designed to develop. In wealth management, the entire production layer surrounding advisory relationships (the compliance drafting, the financial plan generation, and the templated tax planning that once required hours of specialist time) is being collapsed into minutes by AI tools. When a fintech startup announced an upgrade to its AI tax planning assistant in early 2026, UK wealth management firms lost billions in combined market capitalisation in forty-eight hours. The market was not reacting to a single product; it was repricing the discovery that much of what clients had been paying for was production difficulty, not judgement, and production difficulty had just evaporated.

These are not stories about technology replacing people. They are stories about institutions discovering, in real time, that the signals we have all relied on to measure competence, trust, and quality were proxies that worked only because producing credible output used to be hard. The research here covers how AI systems are trained to agree with us rather than inform us, how the knowledge bases that future models learn from are already contaminated by current model output, how agentic AI systems are being deployed with governance architectures that no serious organisation would tolerate for a human employee, and what individuals and institutions can do about all of it.

AI makes verification invisible, agreement automatic, and judgement optional. The question is whether we notice before it matters.

What This Site Offers

There is an enormous amount of AI content online, and most of it is either highly technical material written for engineers or surface-level commentary that sounds confident but lacks analytical depth. This site sits in the space that most professionals actually need: rigorous, evidence-based analysis written for people who use AI in their work and want to understand what it is genuinely doing. Everything here is grounded in peer-reviewed research, practitioner experience across regulated industries, and original analysis updated continuously as the technology advances. The pace of change means that traditional publication cycles cannot keep up; this site operates in real time because the questions it addresses are changing in real time.

Engaging seriously with this material puts readers in a position that is, conservatively, shared by fewer than one in ten AI users today. The practical advantages of that understanding, in professional credibility, decision quality, and the ability to catch errors that others miss entirely, are substantial.

The Mirror Effect

Eight essays building the complete framework from the ground up. Designed not to teach prompting tricks but to make visible the dynamics that even experienced users do not realise are shaping their work. Most people believe they use AI competently; this series provides the evidence and the tools to actually do so.

AI in Finance & Higher Education

Analytical pieces applying the framework to live developments as they happen: market reactions to capability announcements, the repricing of value chains, assessment integrity challenges, and the consequences of deploying AI without verification architecture.

Original Papers & Analysis

Authored by Paul Gallacher and published in real time as findings develop. A perspective unavailable through traditional publication cycles: peer-reviewed where published, transparently pre-print where not. All sources verified, all claims evidenced.

Practical Learning Opportunities

Structured resources for working with AI without losing independent judgement. Not prompt engineering but interaction design: how to verify what looks convincing, how to maintain critical thinking, and how to build workflows that catch what unstructured AI use misses.

Start with the Mirror Effect Article Series. Eight essays, no prerequisites, and an honest look at what AI is actually doing to the way we all think and work.

Paul Gallacher

Creator of the Mirror Effect framework and article series.

Paul is a Senior Academic at Walbrook Institute London (formerly London Institute of Banking & Finance), where he is Academic Lead for the Apprenticeship Banking, Finance & Investment Degree Provision and Undergraduate Banking & Finance programmes. A Fellow of the Higher Education Academy with chartered qualifications from the Chartered Institute of Bankers in Scotland and the Chartered Insurance Institute, he brings 25 years' experience across banking, asset management, derivatives trading, and InsurTech.

Previous roles span executive leadership, controlled function oversight, risk management, AI/ML operations, and quantitative research across regulated firms in private and listed environments, with particular expertise in designing proprietary pricing and behavioural models grounded in advanced statistical methodologies. Paul has held positions as examiner with the Chartered Banker Institute and auditor with McGraw Hill, and past consultancy has spanned InsurTech, private equity, and EdTech in the UK and Middle East, advising ventures through to substantial exit.

Paul lectures extensively both domestically and internationally, including programme leadership at undergraduate and postgraduate level and international delivery in China. He has presented at international regulatory conferences on machine learning and AI, and is an Expert Delegate with the Digital Education Council on AI Usage in Higher Education.